Utility Construction from ToM Reports

For a single incoming proposal, the decision maker would receive the item multiset \(I_{\mathrm{recv}}\) and give up the item multiset \(I_{\mathrm{give}}\).

Item utilities are read from the decision maker's own reported ToM dictionaries: \(v_i^{(k)}\) is the value of item \(i\) in the order-\(k\) dictionary.

\[ G_k=\sum_{i\in I_{\mathrm{recv}}} v_i^{(k)},\qquad L_k=\sum_{i\in I_{\mathrm{give}}} v_i^{(k)} \]

\[ U^{(0)}=G_0-\rho L_0 \]

\[ U^{(0,1)}=U^{(0)}+w_1\!\left(G_1-\gamma L_1\right) \]

\[ U^{(0,1,2)}=U^{(0,1)}+w_2\!\left(G_2-\kappa L_2\right) \]
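Substituting the first two definitions into the third gives the full second-order utility as a single expression. This is only a restatement of the equations above, not an additional assumption:

\[ U^{(0,1,2)}=\left(G_0-\rho L_0\right)+w_1\!\left(G_1-\gamma L_1\right)+w_2\!\left(G_2-\kappa L_2\right) \]

Setting \(w_2=0\) recovers \(U^{(0,1)}\), and setting \(w_1=w_2=0\) recovers \(U^{(0)}\).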

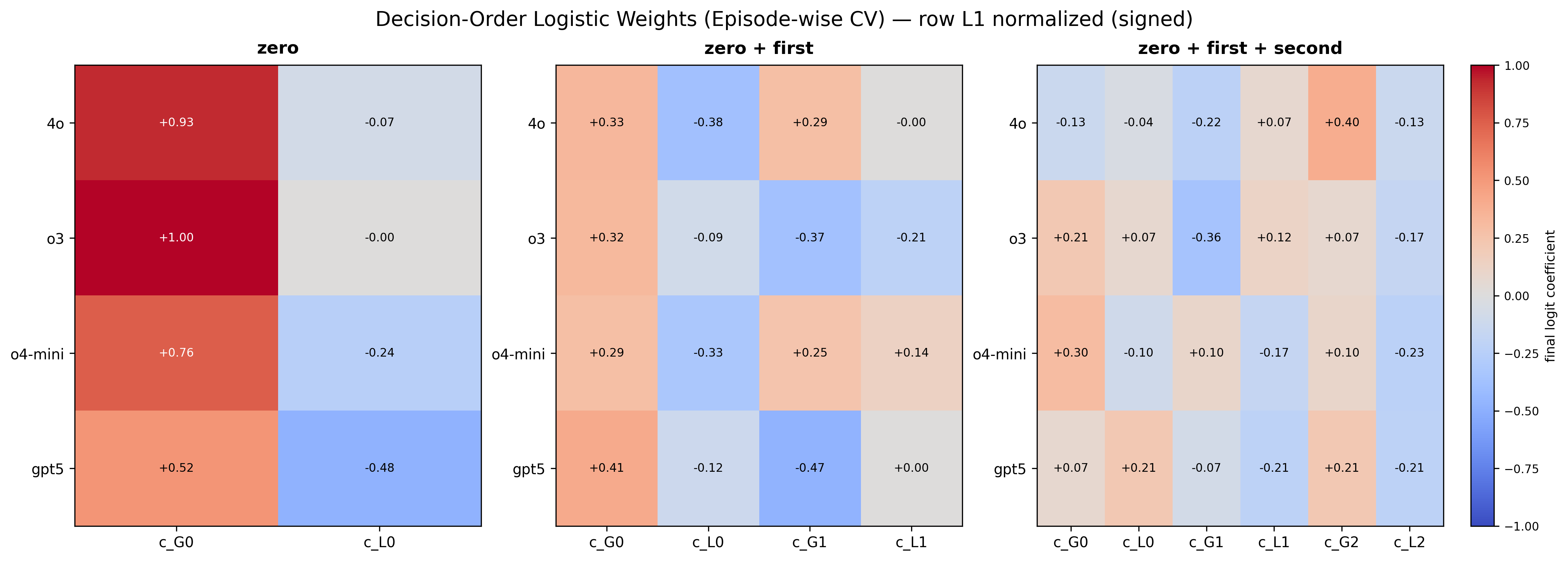

Higher-order utilities are added progressively from zero-order to second-order ToM terms.

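Here \(G_k\) is the perceived gain and \(L_k\) the perceived loss under ToM order \(k\). The coefficients \(\rho\), \(\gamma\), and \(\kappa\) scale the loss term at orders 0, 1, and 2, and \(w_1\), \(w_2\) weight the first- and second-order contributions.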
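The construction can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation. The function names `gain_loss` and `tom_utilities`, the representation of each ToM dictionary as a plain `dict` from item name to value, the item names, and all coefficient values are assumptions made for the example.

```python
from collections import Counter
from typing import Mapping, Sequence, Tuple


def gain_loss(values: Mapping[str, float],
              recv: Counter, give: Counter) -> Tuple[float, float]:
    """Return (G_k, L_k) for one ToM order.

    `values` is the order-k dictionary (item -> v_i^(k)); `recv` and `give`
    are multisets, so each item's value is counted once per copy.
    """
    gain = sum(values[item] * n for item, n in recv.items())
    loss = sum(values[item] * n for item, n in give.items())
    return gain, loss


def tom_utilities(tom_values: Sequence[Mapping[str, float]],
                  recv: Counter, give: Counter,
                  rho: float, gamma: float, kappa: float,
                  w1: float, w2: float) -> Tuple[float, float, float]:
    """Return (U^(0), U^(0,1), U^(0,1,2)) from the order-0, 1, 2 dictionaries."""
    (g0, l0), (g1, l1), (g2, l2) = (
        gain_loss(v, recv, give) for v in tom_values[:3]
    )
    u0 = g0 - rho * l0                      # zero-order term
    u01 = u0 + w1 * (g1 - gamma * l1)       # add first-order term
    u012 = u01 + w2 * (g2 - kappa * l2)     # add second-order term
    return u0, u01, u012


# Hypothetical proposal: receive two apples, give one book.
tom_values = [
    {"apple": 3, "book": 5},  # order 0 (illustrative values)
    {"apple": 3, "book": 4},  # order 1 (illustrative values)
    {"apple": 1, "book": 2},  # order 2 (illustrative values)
]
recv = Counter({"apple": 2})
give = Counter({"book": 1})

print(tom_utilities(tom_values, recv, give,
                    rho=1.5, gamma=1.0, kappa=0.5, w1=0.5, w2=0.25))
# → (-1.5, -0.5, -0.25)
```

With these made-up numbers the zero-order utility is negative, and each higher-order term moves the total closer to zero, which shows how the progressive terms can shift the evaluation of the same proposal.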

Important: this is a behavioral approximation model. It is used to explain observed decisions, not to claim an internal mechanistic decomposition of the LLM.